CIN25 - Colorization in ImageNet

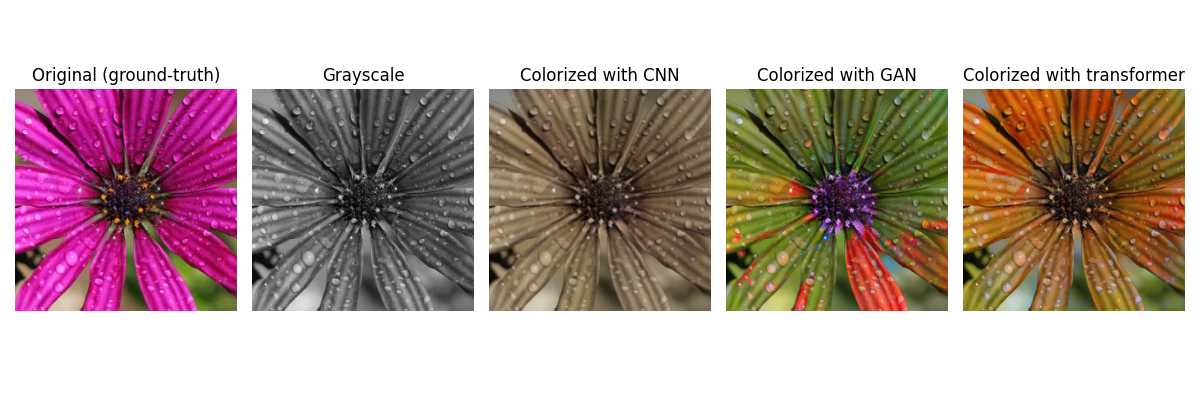

Automatic image colorization aims to generate plausible and semantically consistent colors from grayscale inputs. Despite significant progress driven by deep learning, evaluating colorization quality remains an open problem. Colorization is inherently ill-posed: multiple valid color solutions may exist for a single grayscale image, and perceptual quality depends strongly on semantic correctness and visual realism.

To better understand and evaluate the behavior of objective quality metrics under controlled semantic conditions, we constructed an evaluation dataset based on images from the ILSVRC2012 ImageNet test set [1]. The dataset contains 25 distinct scenes selected to cover a wide spectrum of semantic categories, including objects, people, animals, indoor and outdoor environments, and both daytime and nighttime conditions. This diversity keeps the evaluation from being biased toward a specific type of content and reflects realistic challenges encountered in image colorization tasks.

Each scene is represented by:

- original image - reference color image,

- grayscale image - input used for colorization,

- CNN colorization - output of a CNN-based method,

- GAN colorization - output of a GAN-based method,

- transformer colorization - output of a transformer-based method.

Because each scene is colorized by several representative models (CNN-based [2], GAN-based [3-5], and transformer-based [6]; implementations adapted from [7]), the dataset enables systematic assessment of color naturalness and perceptual consistency across different color interpretations of the same scene.

Images are organized into three folders:

- original_color_images - contains all ground-truth color images,

- grayscale_images - contains grayscale versions of the images,

- colorized_images - contains all colorized outputs.

Images are named according to the scheme ID_method.extension, where:

- ID - a three-digit identifier aligned with the ILSVRC2012 test set,

- method - the image type:

  - orig - original image,

  - gray - grayscale image,

  - cnn - CNN-based colorization,

  - gan - GAN-based colorization,

  - tf - transformer-based colorization.

All images have a spatial resolution of 256x256 pixels.
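The folder layout and naming scheme above can be handled with a few lines of Python. The following is a minimal sketch, not part of the dataset distribution: the helper names `parse_name` and `scene_paths`, the root folder name, and the default `png` extension are assumptions made for illustration, since the page does not state the file extension.

```python
import re
from pathlib import Path

# Folder for each method tag, following the three-folder layout described above.
FOLDER_BY_METHOD = {
    "orig": "original_color_images",
    "gray": "grayscale_images",
    "cnn": "colorized_images",
    "gan": "colorized_images",
    "tf": "colorized_images",
}

# ID_method.extension with a three-digit ID and one of the five method tags.
NAME_PATTERN = re.compile(r"^(\d{3})_(orig|gray|cnn|gan|tf)\.(\w+)$")


def parse_name(filename):
    """Split 'ID_method.extension' into (image_id, method, extension)."""
    match = NAME_PATTERN.match(filename)
    if match is None:
        raise ValueError(f"not a CIN25-style file name: {filename!r}")
    return match.groups()


def scene_paths(root, image_id, extension="png"):
    """Return the five expected file paths for one scene, keyed by method tag.

    The extension default is an assumption; adjust it to the actual files.
    """
    return {
        method: Path(root) / folder / f"{image_id}_{method}.{extension}"
        for method, folder in FOLDER_BY_METHOD.items()
    }
```

For example, `scene_paths("CIN25", "012")` would list the reference, grayscale, and three colorized files expected for the scene with ID 012, which makes it straightforward to pair each colorized output with its reference image when computing objective metrics.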

In addition to the image data, the dataset includes both raw subjective ratings and a processed mean opinion score (MOS) file. The raw file contains individual user evaluations, enabling further analysis or alternative processing strategies. The MOS values are computed from these ratings after applying a data cleaning procedure, including outlier detection, consistency checks, and removal of unreliable participants. Individual scores are then normalized using per-user z-score normalization to account for differences in scoring behavior, and the final MOS for each image is obtained by averaging the normalized ratings across all valid users. This provides a reliable measure of perceived color naturalness for each colorized image.
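The normalization and averaging steps can be sketched as follows. This is an illustrative reconstruction under stated assumptions, not the authors' processing script: the input is assumed to be already-cleaned ratings given as hypothetical `(user, image, score)` tuples, the population standard deviation is used for the z-scores, and users who gave the same score to every image are skipped because their z-scores are undefined. The format of the raw ratings file and any rescaling of the final MOS are not described on this page.

```python
from collections import defaultdict
from statistics import mean, pstdev


def zscore_mos(ratings):
    """Compute per-image MOS from per-user z-scored ratings.

    ratings: iterable of (user, image, score) tuples, assumed to be
    cleaned already (outliers and unreliable participants removed).
    """
    # Group the scores by user so each user is normalized independently.
    by_user = defaultdict(list)
    for user, image, score in ratings:
        by_user[user].append((image, score))

    # Collect z-scores per image across all users.
    by_image = defaultdict(list)
    for items in by_user.values():
        scores = [score for _, score in items]
        mu = mean(scores)
        sigma = pstdev(scores)  # population std; an assumption of this sketch
        if sigma == 0:
            continue  # constant rater: z-score undefined, skipped here
        for image, score in items:
            by_image[image].append((score - mu) / sigma)

    # Final MOS: average of normalized ratings for each image.
    return {image: mean(z) for image, z in by_image.items()}
```

Because each user's scores are centered and scaled before averaging, a user who rates everything high and a user who rates everything low contribute comparably, which is the stated purpose of the per-user normalization.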

References

[1] J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li and L. Fei-Fei, "ImageNet: A large-scale hierarchical image database," 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 2009, pp. 248-255, doi: 10.1109/CVPR.2009.5206848.

[2] S. Iizuka, E. Simo-Serra and H. Ishikawa, "Let there be color! Joint end-to-end learning of global and local image priors for automatic image colorization with simultaneous classification," ACM Trans. Graph., vol. 35, no. 4, Article 110, 2016, doi: 10.1145/2897824.2925974.

[3] P. Isola, J.-Y. Zhu, T. Zhou and A. A. Efros, "Image-to-image translation with conditional adversarial networks," 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 2017, pp. 5967-5976, doi: 10.1109/CVPR.2017.632.

[4] K. Nazeri, E. Ng and M. Ebrahimi, "Image colorization using generative adversarial networks," in F. Perales, J. Kittler (eds), Articulated Motion and Deformable Objects, AMDO 2018, Lecture Notes in Computer Science, vol. 10945, Springer, Cham, doi: 10.1007/978-3-319-94544-6_9.

[5] L. Kiani, M. Saeed and H. Nezamabadi-pour, "Image colorization using generative adversarial networks and transfer learning," 2020 International Conference on Machine Vision and Image Processing (MVIP), Iran, 2020, pp. 1-6, doi: 10.1109/MVIP49855.2020.9116882.

[6] M. Kumar, D. Weissenborn and N. Kalchbrenner, "Colorization transformer," arXiv:2102.04432, 2021.

[7] I. Šetka, "Image colorization methods based on generative deep learning," M.S. thesis, Department of Communication and Space Technologies, University of Zagreb Faculty of Electrical Engineering and Computing, Croatia, 2025, https://urn.nsk.hr/urn:nbn:hr:168:532664

CIN25 release agreement

The CIN25 image dataset can be used under the following terms:

- All documents and papers that report research results obtained using CIN25 will acknowledge the use of the CIN25 image dataset.

Please cite the following paper:

I. Zeger, S. Grgic “???,” ???, vol. ???, 2026, pp. ???–???.

- While every effort has been made to ensure accuracy, CIN25 owners cannot accept responsibility for errors or omissions.

- Use of CIN25 is free of charge.

- CIN25 owners reserve the right to revise, amend, alter or delete the information provided herein at any time, but shall not be responsible for or liable in respect of any such revisions, amendments, alterations or deletions.